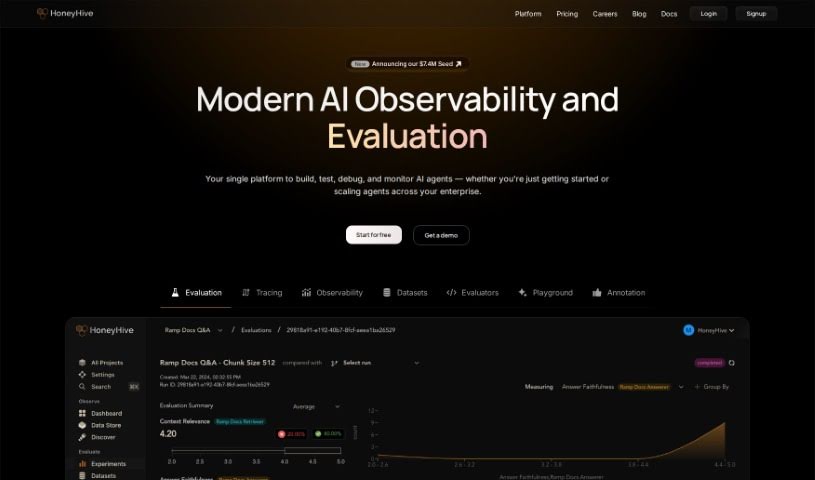

HoneyHive

Discover what HoneyHive is and how to use it effectively in 2025. Explore its features and see how it stacks up against other Software Development Tools.

What is HoneyHive?

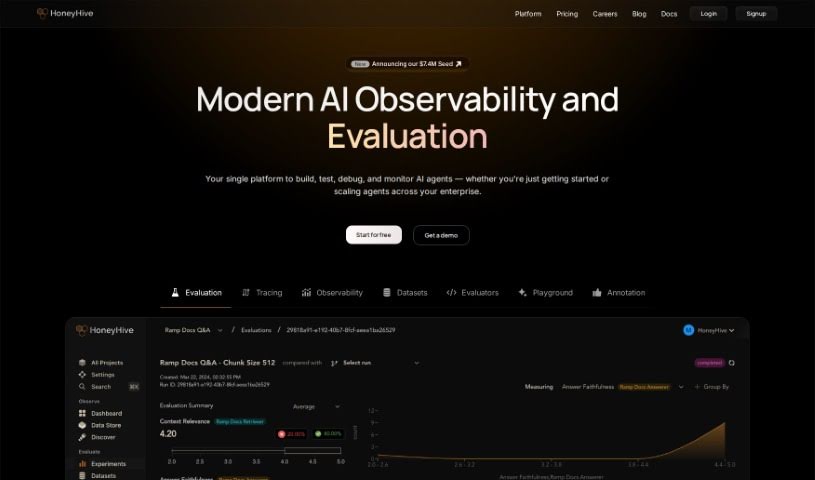

HoneyHive is an AI developer platform that helps teams securely deploy and improve large language models (LLMs) in production. It works with any model, framework, or environment, and provides tools for monitoring and evaluating LLM agents so teams can track their performance and quality. Its features cover offline evaluation, monitoring, collaborative prompt engineering, debugging, evaluation metrics, and model version management. HoneyHive emphasizes enterprise-grade security and scalability, offers end-to-end encryption, and can be hosted on HoneyHive’s cloud or in your own Virtual Private Cloud (VPC). Dedicated customer support is also available.

Who created HoneyHive?

HoneyHive launched on December 22, 2022, and was created by Mohak Shah. Its main goal is to let teams safely deploy and improve large language models (LLMs) in production environments. To that end, it provides monitoring and evaluation tools along with a collaborative toolkit for prompt engineering.

What is HoneyHive used for?

- Curating Data: You can filter and gather datasets directly from your production logs.

- Fine-tuning Models: Export your curated datasets to fine-tune your custom models.

- Active Learning: Build pipelines that use active learning to improve your models.

- LLM Monitoring & Evaluation: Track how your large language models (LLMs) perform and evaluate the quality of their outputs.

- Product Deployment: Confidently deploy products that are powered by LLMs.

- Prompt Engineering: Work together with your team on prompt engineering.

- Debugging: Get support for debugging complex chains, agents, and RAG pipelines.

- Model Management: Keep your models organized with a model registry and version management system.

- Stack Integration: It’s designed to integrate smoothly with any LLM stack you’re using.

- Pipeline Focus: Offers a pipeline-centric approach, which is great for managing complex chains, agents, and retrieval pipelines.
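
The data curation and fine-tuning export items above can be illustrated with a small, library-free sketch. It does not use the HoneyHive SDK: the log fields (`input`, `output`, `user_rating`, `latency_ms`), the thresholds, and the `prompt`/`completion` output format are all assumptions chosen for illustration.

```python
import json

# Hypothetical production log records; the field names are assumptions,
# not HoneyHive's actual log schema.
LOGS = [
    {"input": "Reset my password", "output": "Go to Settings > Security.",
     "user_rating": 5, "latency_ms": 420},
    {"input": "Cancel my order", "output": "I cannot help with that.",
     "user_rating": 1, "latency_ms": 380},
    {"input": "Track my package", "output": "Use the tracking link in your email.",
     "user_rating": 4, "latency_ms": 2900},
]


def curate(logs, min_rating=4, max_latency_ms=2000):
    """Keep only well-rated, reasonably fast interactions."""
    return [
        record for record in logs
        if record["user_rating"] >= min_rating
        and record["latency_ms"] <= max_latency_ms
    ]


def to_jsonl(records):
    """Serialize records as JSON Lines, one prompt/completion pair per line."""
    lines = [
        json.dumps({"prompt": r["input"], "completion": r["output"]})
        for r in records
    ]
    return "\n".join(lines)


curated = curate(LOGS)
dataset = to_jsonl(curated)
print(dataset)
```

In practice the filter criteria would come from whatever properties and feedback signals your application logs, and the export format would match what your fine-tuning provider expects.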

Who is HoneyHive for?

HoneyHive is aimed at a range of roles in the AI and data space:

- Data scientists

- AI developers

- Data engineers

- Machine learning engineers

- Data analysts

- Engineers in general

- Domain experts

How to use HoneyHive?

Here are the main ways to use HoneyHive:

- Monitor Your Metrics: Track your application’s performance, usage, and security metrics so you can spot issues early.

- Evaluate Live: Run live auto-evaluations to find and fix failures as they happen.

- Get Dashboard Insights: The dashboard gives you a quick overview of all the essential metrics you need.

- Create Custom Charts: Want to track specific metrics? You can build your own charts based on your data queries.

- Slice and Dice Data: Use filters and groups to break your data down and understand it in more depth.

- Log Custom Properties: Log hundreds of different properties to get more detailed insights.

- Gather User Feedback: Track feedback from your end users in real time to improve their experience.

- Manage Prompts in Studio: Use the Studio workspace to collaborate with your team on prompts, try out different versions, and debug efficiently.

- Test in the Playground: Test out new prompts and models together in a shared workspace.

- Track Versions: Keep a history of your prompt changes and different versions.

- Deploy Prompts Easily: Deploy your prompt templates with just a single click for smooth integration.

- Quick Integration: Getting started takes just 3 lines of code. You can integrate using the Python or TypeScript SDKs, or send OpenTelemetry (OTEL) traces from any programming language.

- Secure & Scalable Infrastructure: You can count on secure, scalable infrastructure that includes end-to-end encryption, role-based access controls, and strong data privacy measures.
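
To make the auto-evaluation and "slice and dice" ideas above more concrete, here is a minimal sketch in plain Python. It is not HoneyHive's API: the event fields (`model`, `prompt_version`, `output`) and the toy `evaluate` rule are assumptions, standing in for whatever custom properties and evaluators you would configure.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical logged events with custom properties; the schema is an assumption.
EVENTS = [
    {"model": "model-a", "prompt_version": "v1", "output": "Sure, here is the answer."},
    {"model": "model-a", "prompt_version": "v2", "output": ""},
    {"model": "model-b", "prompt_version": "v2", "output": "I'm sorry, I can't help with that."},
    {"model": "model-b", "prompt_version": "v2", "output": "Your order ships tomorrow."},
]


def evaluate(event):
    """Toy automatic evaluator: fail on empty outputs or refusals."""
    text = event["output"].strip().lower()
    return bool(text) and "can't help" not in text


def pass_rate_by(events, key):
    """Group events by a logged property and compute the pass rate per group."""
    groups = defaultdict(list)
    for event in events:
        groups[event[key]].append(1.0 if evaluate(event) else 0.0)
    return {value: mean(scores) for value, scores in groups.items()}


by_version = pass_rate_by(EVENTS, "prompt_version")
by_model = pass_rate_by(EVENTS, "model")
print(by_version)
print(by_model)
```

Grouping the same evaluator results by different properties is what lets you see, for example, whether a drop in quality follows a prompt change or a model change.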

In short, HoneyHive combines secure data management, automated evaluations, human feedback, and a collaborative workspace for developing and deploying AI models. It is built for large-scale AI projects and offers dedicated support along with secure hosting options.
