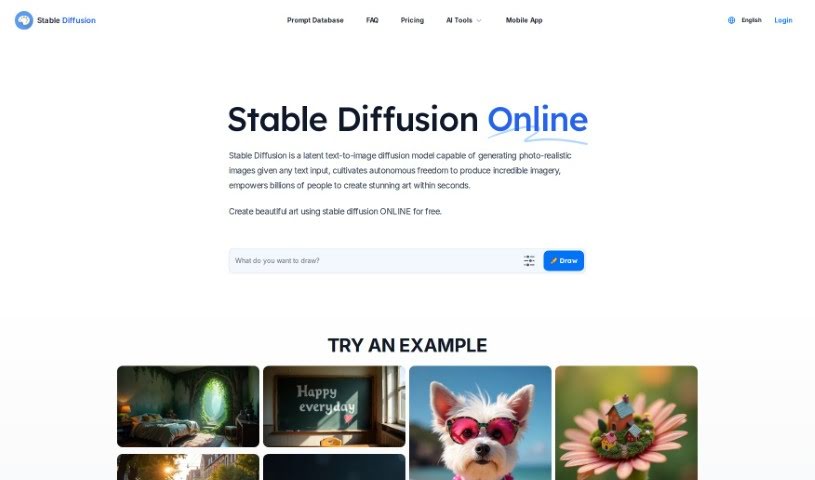

Stable Diffusion

Discover what Stable Diffusion is and how to use it effectively in 2025. We'll explore its features and compare it to other text-to-image tools.

What is Stable Diffusion?

Stable Diffusion is a deep learning model that generates images from text. Given a simple description, it can produce high-quality, photo-realistic pictures. Stable Diffusion XL (SDXL), a later release in the family, uses a larger UNet backbone network than earlier versions, which lets it produce even better images. What makes the model special is how much control you have over what it creates: you can guide it with descriptive text that specifies the style, the kind of shot, or a pre-set look.

Beyond creating new images, Stable Diffusion can also change existing ones. Inpainting adds or swaps out content inside an image, and outpainting extends an image beyond its original borders. The original model was trained on a subset of LAION-5B, specifically the roughly 2 billion image-text pairs with English captions. LAION-5B is a massive dataset compiled by LAION, a German non-profit, from a general crawl of the internet.

Who created Stable Diffusion?

Stable Diffusion grew out of latent diffusion research by the CompVis group at Ludwig Maximilian University of Munich, working together with Runway. Stability AI supported the project with compute and released the model publicly in August 2022, while LAION provided the training data. Stability AI has since led the development of later versions such as Stable Diffusion XL.

What is Stable Diffusion used for?

- It generates high-quality, photo-realistic images from text prompts.

- You can fine-tune the output with descriptive text for things like style, framing, or specific presets, which gives you a high degree of control over the final image.

- It can add, replace, or extend parts of existing images using techniques like inpainting and outpainting.

- It works in a compressed ‘latent space’: images are encoded into a much smaller representation, and generated results are decoded back into full-size pictures.

- During training, images are gradually destroyed with noise and the model learns to reverse that process. At generation time, it starts from noise and rebuilds an image from scratch.

- Stable Diffusion XL uses a larger UNet backbone network, which helps create even better images.

- With SDXL Turbo, you can synthesize images in a single step while keeping high sampling fidelity, which makes real-time text-to-image output possible.

- It’s used for both commercial and non-commercial image generation. License terms differ between model versions, so check the license of the version you use before commercial work.

- To run it smoothly, you’ll generally need a GPU with 8GB of VRAM or more.

- Getting the best results means writing clear, specific prompts that accurately describe the image you envision.

- The team is continuously working on improving the model and introducing new features.
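To give a feel for what compressing images into latent space means in numbers, here is a minimal sketch of the size arithmetic. It assumes an 8x spatial downscale and 4 latent channels, which matches the autoencoder used by the Stable Diffusion 1.x/2.x and SDXL releases; other versions may differ. The function names are hypothetical helpers, not part of any library.

```python
# Sketch of latent-space size arithmetic.
# Assumption: 8x spatial downscale and 4 latent channels (SD 1.x/2.x, SDXL).
VAE_DOWNSCALE = 8
LATENT_CHANNELS = 4
RGB_CHANNELS = 3


def latent_shape(height: int, width: int) -> tuple[int, int, int]:
    """Return (channels, height, width) of the latent for an RGB image."""
    if height % VAE_DOWNSCALE or width % VAE_DOWNSCALE:
        raise ValueError("height and width should be multiples of 8")
    return (LATENT_CHANNELS, height // VAE_DOWNSCALE, width // VAE_DOWNSCALE)


def compression_ratio(height: int, width: int) -> float:
    """How many times fewer values the latent holds than the RGB image."""
    channels, lat_h, lat_w = latent_shape(height, width)
    return (RGB_CHANNELS * height * width) / (channels * lat_h * lat_w)


shape_512 = latent_shape(512, 512)
ratio_512 = compression_ratio(512, 512)
print(shape_512, ratio_512)
```

Under these assumptions the denoising network handles about 48 times fewer values than it would on raw pixels, which is a large part of why the model fits on consumer GPUs.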

Who is Stable Diffusion for?

- Graphic Designers

- Illustrators

- Marketing Specialists

- Advertising Professionals

- Content Creators

- Game Developers

- Architects

- Fashion Designers

- Web Designers

- Photographers

- Film Directors

- Digital Artists

- Animators

- Product Designers

- UI/UX Designers

How to use Stable Diffusion?

To get the most out of the Stable Diffusion tool, here’s a straightforward guide:

- Access Stable Diffusion: You have a couple of options. You can use Stable Diffusion directly online, or if you’re looking for inspiration, check out the Prompt Database. It has over 9 million prompts that can help you generate those high-quality, photo-realistic images just by inputting text.

- Understanding the Tool: Remember, Stable Diffusion is designed to produce really high-quality images with precise control. It takes descriptive text inputs for things like style, framing, or presets. Plus, it can modify existing images using inpainting and outpainting techniques.

- Creating Prompts: The key to great results is providing clear, descriptive text. Think about the image you want and use language that really captures its details. For instance, if you’re aiming for a sunset image, don’t just say ‘sunset.’ Instead, use words like ‘vibrant orange,’ ‘deep red,’ and ‘soft purple’ to specify the colors you envision.

- Model Selection: For photo-realistic results, choose the Stable Diffusion XL model where it’s available. Its larger UNet backbone generally produces better images than the earlier versions.

- Hardware Requirements: Stable Diffusion runs on most recent NVIDIA and AMD GPUs. For smooth performance, you’ll want a system with at least 8GB of VRAM.

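- Image Generation Process: Here’s a bit about how it works. The model operates in a compressed latent space rather than on full-size pixels. During training, images are encoded into that space and gradually corrupted with noise, and the model learns to undo the corruption. To generate a new image, it starts from pure noise, removes the noise step by step under the guidance of your prompt, and decodes the result back into a picture. This is what allows it to create realistic images from scratch.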
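The noising step in the process above can be illustrated with a small standard-library sketch. It uses a plain linear noise schedule with textbook values (1000 steps, betas from 0.0001 to 0.02) purely for illustration; these are assumptions, not Stable Diffusion's actual configuration, and the four-number "latent" stands in for a real latent tensor. All function names are hypothetical.

```python
import math
import random


def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise amounts, increasing linearly (illustrative values)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def signal_fractions(betas):
    """Cumulative product of (1 - beta): how much of the original survives."""
    fractions, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        fractions.append(running)
    return fractions


def add_noise(values, alpha_bar, rng):
    """Jump straight to a noise level: sqrt(a) * x + sqrt(1 - a) * noise."""
    keep = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [keep * v + noise_scale * rng.gauss(0.0, 1.0) for v in values]


alpha_bars = signal_fractions(linear_betas(1000))
rng = random.Random(0)  # fixed seed so repeated runs give the same noise
latent = [1.0, -0.5, 0.25, 0.0]  # toy stand-in for an encoded image

slightly_noisy = add_noise(latent, alpha_bars[9], rng)     # early step
almost_pure_noise = add_noise(latent, alpha_bars[-1], rng)  # final step
print(alpha_bars[0], alpha_bars[-1])
```

Early steps keep almost all of the original signal, while the final step keeps almost none. Generation runs this in reverse: a trained network estimates the noise at each step and removes it, which this sketch does not attempt.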

By following these steps, you’ll be well on your way to effectively using Stable Diffusion to create amazing images from your text ideas.
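If you would rather run the model locally than use an online service, the steps above can be sketched in Python roughly as follows. This is one possible setup, not the only one: it assumes the Hugging Face `diffusers` and `torch` packages are installed, a CUDA-capable GPU is available, and the `stabilityai/stable-diffusion-xl-base-1.0` checkpoint is used. None of these are named in the guide above. `build_prompt` and `generate_image` are hypothetical helpers, and the step count and guidance scale are illustrative starting points.

```python
def build_prompt(subject, colors=(), style="", shot=""):
    """Join the descriptive pieces of a prompt into one comma-separated string."""
    parts = [subject, *colors, style, shot]
    return ", ".join(part.strip() for part in parts if part and part.strip())


def generate_image(prompt, output_path="output.png", steps=30, guidance_scale=7.0):
    """Generate one image with SDXL and save it (needs a GPU and a model download)."""
    # Imported lazily so build_prompt works without the heavy dependencies.
    import torch
    from diffusers import StableDiffusionXLPipeline

    pipe = StableDiffusionXLPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0",  # assumed checkpoint
        torch_dtype=torch.float16,  # half precision to reduce VRAM use
    )
    pipe = pipe.to("cuda")
    result = pipe(
        prompt=prompt,
        num_inference_steps=steps,
        guidance_scale=guidance_scale,
    )
    result.images[0].save(output_path)
    return output_path


prompt = build_prompt(
    "sunset over the ocean",
    colors=("vibrant orange", "deep red", "soft purple"),
    style="photo-realistic",
    shot="wide shot",
)
print(prompt)
# generate_image(prompt)  # uncomment on a machine that meets the assumptions above
```

The prompt helper simply applies the advice from the guide: start with the subject, then add the specific colors, style, and framing you have in mind.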
